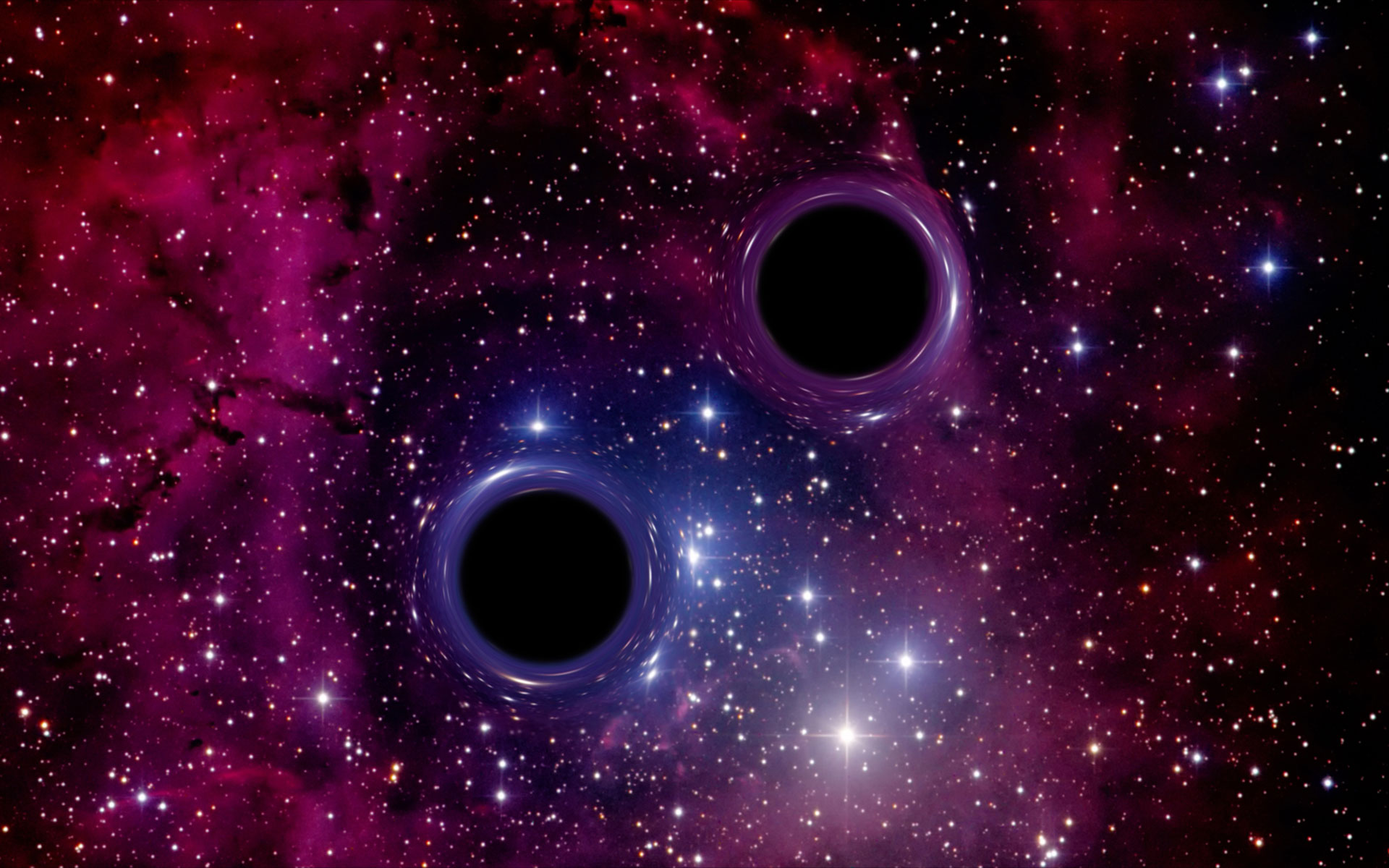

Black holes are among the greatest mysteries of our Universe. A black hole with the mass of our Sun, for example, has a radius of only about 3 kilometers. Black holes in orbit around each other give off gravitational radiation: oscillations of space and time predicted by Albert Einstein in 1916. This radiation carries away energy, so the orbit becomes faster and tighter until the black holes merge in a final burst of radiation. These gravitational waves propagate through the Universe at the speed of light and are detected by observatories in the USA (LIGO) and Italy (Virgo). Scientists compare the data collected by the observatories against theoretical predictions to estimate the properties of the source, including how massive the black holes are and how fast they are spinning. Currently, this procedure takes hours at the very least, and often months.
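The 3-kilometer figure follows from the Schwarzschild radius of a non-rotating black hole, r_s = 2GM/c². A short Python calculation, using rounded standard values for the constants, reproduces it:

```python
# Schwarzschild radius r_s = 2 G M / c^2 of a non-rotating black hole
G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8        # speed of light, m/s
M_SUN = 1.989e30   # solar mass, kg

def schwarzschild_radius(mass_kg):
    """Return the Schwarzschild radius in meters for a mass given in kilograms."""
    return 2 * G * mass_kg / C**2

r_sun = schwarzschild_radius(M_SUN)
print(f"{r_sun / 1000:.2f} km")  # prints 2.95 km
```

Because the radius scales linearly with mass, a black hole of 30 solar masses, typical of the LIGO/Virgo detections, would have a radius of roughly 90 kilometers.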

An interdisciplinary team of researchers is using state-of-the-art machine learning methods to speed up this process. The team includes scientists from the Max Planck Institute for Intelligent Systems (MPI-IS) in Tübingen, the Max Planck Institute for Gravitational Physics (Albert Einstein Institute/AEI) in Potsdam, the University of Tübingen and the University of Maryland. They developed an algorithm based on a deep neural network: a complex computer code, inspired by the human brain, that is built from a sequence of simpler operations. Within seconds, the system infers all properties of the binary black-hole source. The results have recently been published in Physical Review Letters, a flagship physics journal.

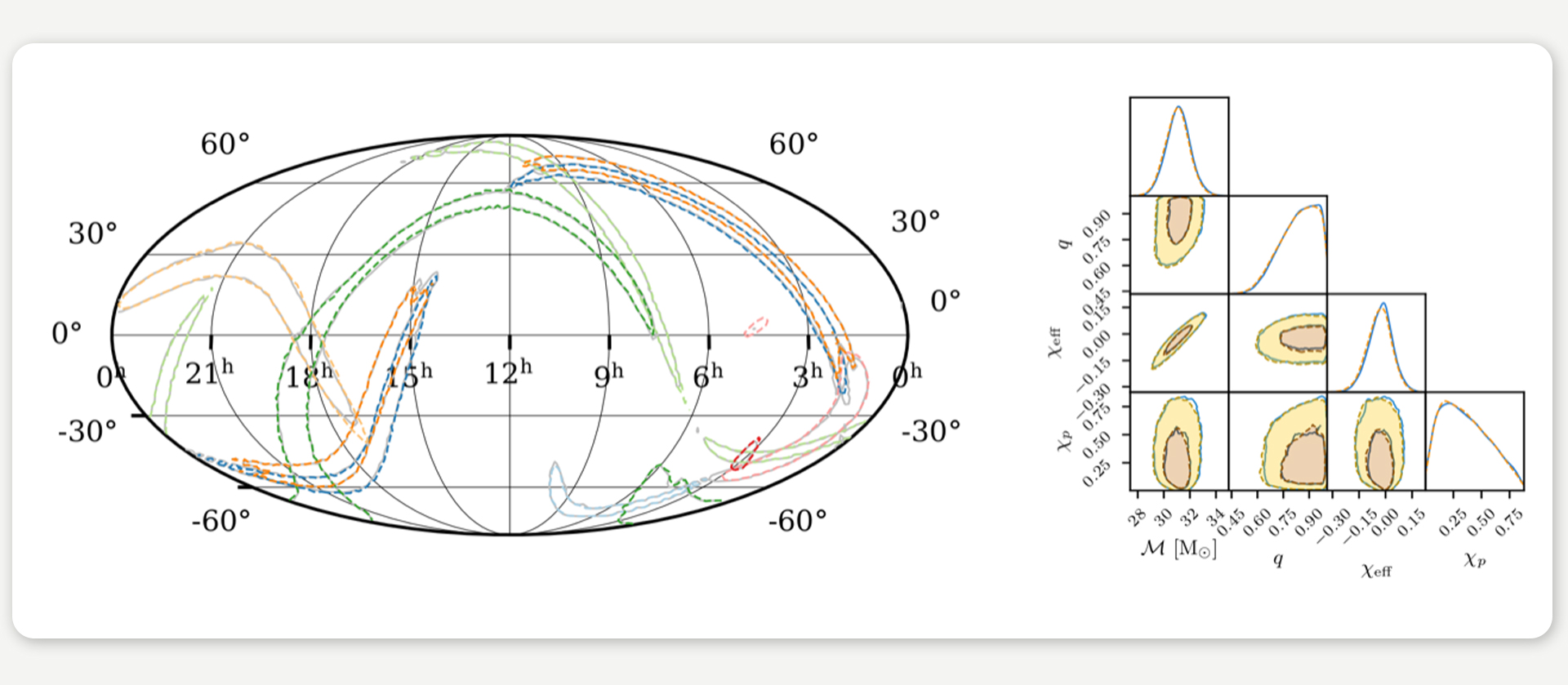

FIGURES / The new machine-learning algorithm accurately estimates all parameters characterizing a binary black hole source in only a few seconds. The figure on the left shows the sky positions inferred for eight events from the first and second LIGO/Virgo observing runs, comparing the machine-learning estimate (colored) with the much slower standard method (gray). The figure on the right shows four inferred parameters for GW150914 (chirp mass, i.e. the effective mass of the binary system; mass ratio; and two spin parameters), with machine learning in orange and the standard approach in blue. © M. Dax, S. R. Green, J. Gair, J. H. Macke, A. Buonanno, B. Schölkopf

Neural network analyses gravitational waves in real time

“Our method can make very accurate statements in a few seconds about how big and massive the two black holes were that generated the gravitational waves when they merged. How fast do the black holes rotate, how far away are they from Earth and from which direction is the gravitational wave coming? We can deduce all this from the observed data and even make statements about the accuracy of this calculation,” explains Maximilian Dax, first author of the study “Real-Time Gravitational Wave Science with Neural Posterior Estimation” and Ph.D. student in the Empirical Inference Department at MPI-IS.

The researchers trained the neural network with many simulations: predicted gravitational-wave signals for hypothetical binary black-hole systems, combined with noise from the detectors. In this way, the network learns the correlations between the measured gravitational-wave data and the parameters characterizing the underlying black-hole system. Training the algorithm, called DINGO (short for Deep INference for Gravitational-wave Observations), takes ten days. After that it is ready for use: from the data of a newly observed gravitational wave, the network deduces the masses, the spins, and all other parameters describing the black holes in just a few seconds. This high-precision analysis decodes ripples in space-time almost in real time, something that had never been done with such speed and precision. The researchers are convinced that the improved performance of the neural network, together with its ability to better handle noise fluctuations in the detectors, will make this method a very useful tool for future gravitational-wave observations.

Simulation-based inference could be transformative in many areas of science

“The further we look into space through increasingly sensitive detectors, the more gravitational-wave signals are detected. Fast methods such as ours are essential for analyzing all of this data in a reasonable amount of time,” says Stephen Green, senior scientist in the Astrophysical and Cosmological Relativity department at the AEI. “DINGO has the advantage that – once trained – it can analyze new events very quickly. Importantly, it also provides detailed uncertainty estimates on parameters, which have been hard to produce in the past using machine-learning methods.” Until now, researchers in the LIGO and Virgo collaborations have analyzed the data with computationally expensive algorithms. These require millions of new simulations of gravitational waveforms to interpret each measurement, which leads to computing times ranging from several hours to months. DINGO avoids this overhead because a trained network needs no further simulations to analyze newly observed data, a property known as ‘amortized inference’: the cost of simulation is paid once, during training, and then shared across all future events.
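To make ‘amortized inference’ concrete, here is a deliberately tiny sketch in Python with NumPy. It is not DINGO: the “waveform” is a hypothetical 5 Hz sinusoid whose amplitude is the only parameter, and a least-squares fit that yields a Gaussian posterior stands in for DINGO’s deep neural network. What it shares with the approach described above is the structure: many simulations up front, then estimates with uncertainties for new data without any further simulations.

```python
import numpy as np

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 64)          # hypothetical toy time grid
template = np.sin(2 * np.pi * 5 * t)   # hypothetical toy "waveform" shape
NOISE_STD = 0.5                        # assumed detector-noise level (toy value)

def simulate(theta, rng):
    """Toy simulator: amplitude theta times a fixed waveform plus Gaussian noise."""
    theta = np.atleast_1d(theta)
    noise = rng.normal(0.0, NOISE_STD, size=(theta.size, t.size))
    return theta[:, None] * template[None, :] + noise

# 1) Training phase (done once): draw parameters from the prior and simulate data.
theta_train = rng.uniform(0.5, 2.0, size=20_000)
x_train = simulate(theta_train, rng)

# 2) Fit an approximate Gaussian posterior q(theta | x) = N(mean(x), s^2).
#    The mean is linear in x; least squares stands in for a neural network here.
X = np.hstack([x_train, np.ones((x_train.shape[0], 1))])
weights, *_ = np.linalg.lstsq(X, theta_train, rcond=None)
residual_std = np.std(theta_train - X @ weights)

def posterior(x):
    """Amortized inference: one cheap evaluation, no new simulations."""
    mean = np.append(x, 1.0) @ weights
    return mean, residual_std

# 3) Inference phase (per new event): analyze a fresh "observation".
x_obs = simulate(1.3, rng)[0]
mean, std = posterior(x_obs)
print(f"theta = {mean:.2f} +/- {std:.2f}")
```

All the simulation cost sits in step 1; step 3 is a single dot product, no matter how many new observations arrive. A real system replaces the linear fit with a flexible neural density estimator so that it can represent non-Gaussian, correlated posteriors over many parameters.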

The method also holds promise for more complex gravitational-wave signal models of binary black holes, which make analyses with current algorithms very time-consuming, and for binary neutron stars. Whereas colliding black holes release energy exclusively in the form of gravitational waves, merging neutron stars also emit electromagnetic radiation. They can therefore also be seen by telescopes, which must be pointed at the relevant region of the sky as quickly as possible to observe the event. This requires determining very quickly where the gravitational wave is coming from, which is exactly what the new machine-learning method makes possible. In the future, this information could be used to point telescopes in time to observe electromagnetic signals from collisions of two neutron stars, or of a neutron star with a black hole.

Alessandra Buonanno, director at the AEI, and Bernhard Schölkopf, director at the MPI-IS, are thrilled with the prospect of taking their successful collaboration to the next level. Buonanno expects that “going forward, these approaches will also enable a much more realistic treatment of the detector noise and of the gravitational signals than is possible today using standard techniques,” and Schölkopf adds that such “simulation-based inference using machine learning could be transformative in many areas of science where we need to infer a complex model from noisy observations.”

Text: Linda Behringer, Maximilian Dax, Elke Müller

Cover illustration: Binary black hole system. © iStock.com/brightstars/gmutlu

This blog post is based on a press release of the Max Planck Institute for Intelligent Systems (MPI-IS) in Tübingen, a partner institution of our Cluster of Excellence, and the Max Planck Institute for Gravitational Physics (Albert Einstein Institute/AEI) in Potsdam.
